Making smoke rub for BBQ


How to make baby back ribs – May 2026

Done simply with ribs that were on sale.

Replacing the CMOS battery and doing the “100Ω fix” on a QNAP TS-451+

Trying to resolve a BIOS boot issue.

This didn’t fix the core storage corruption issue, but I did get the ability to boot into BIOS again.

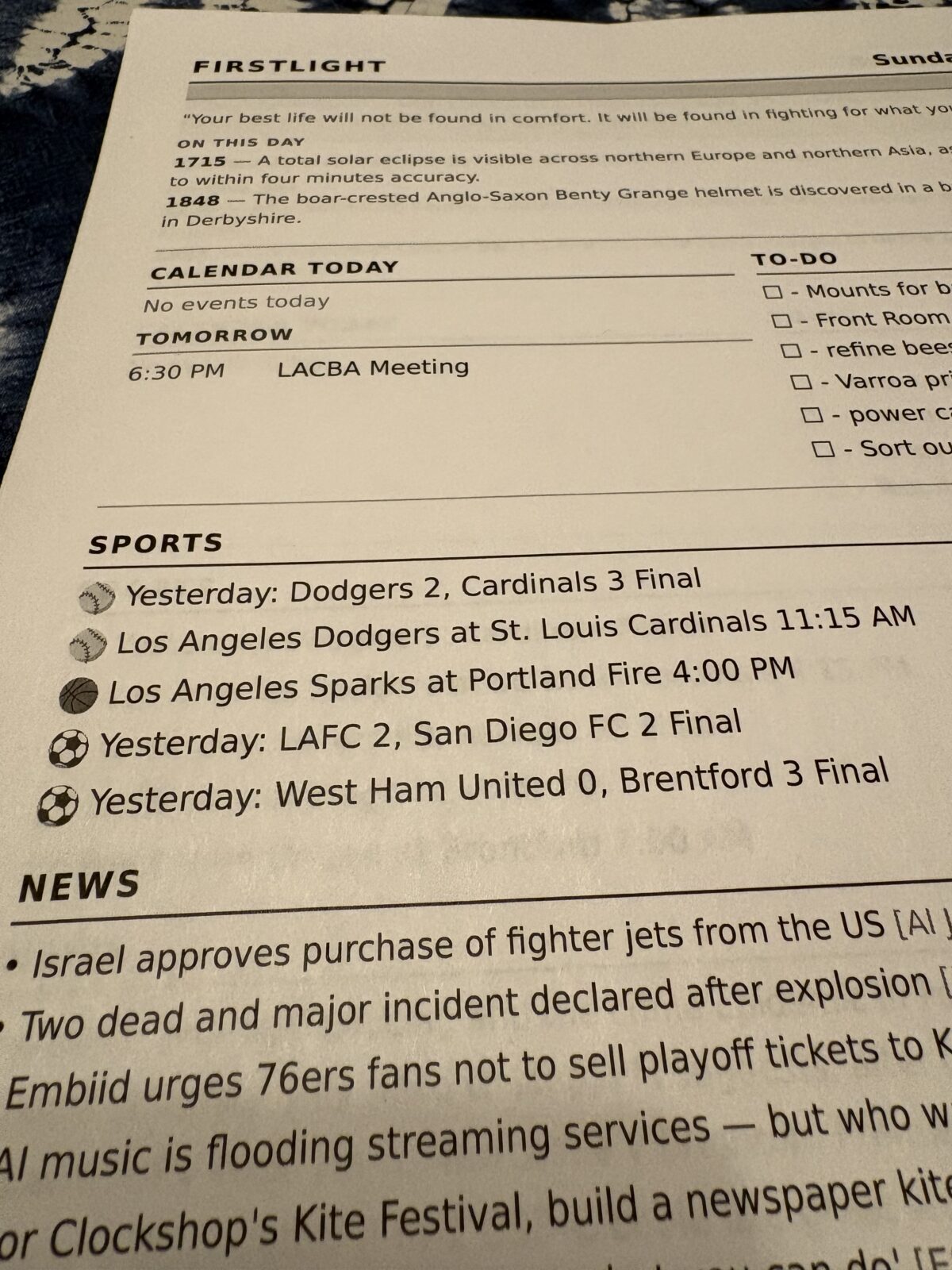

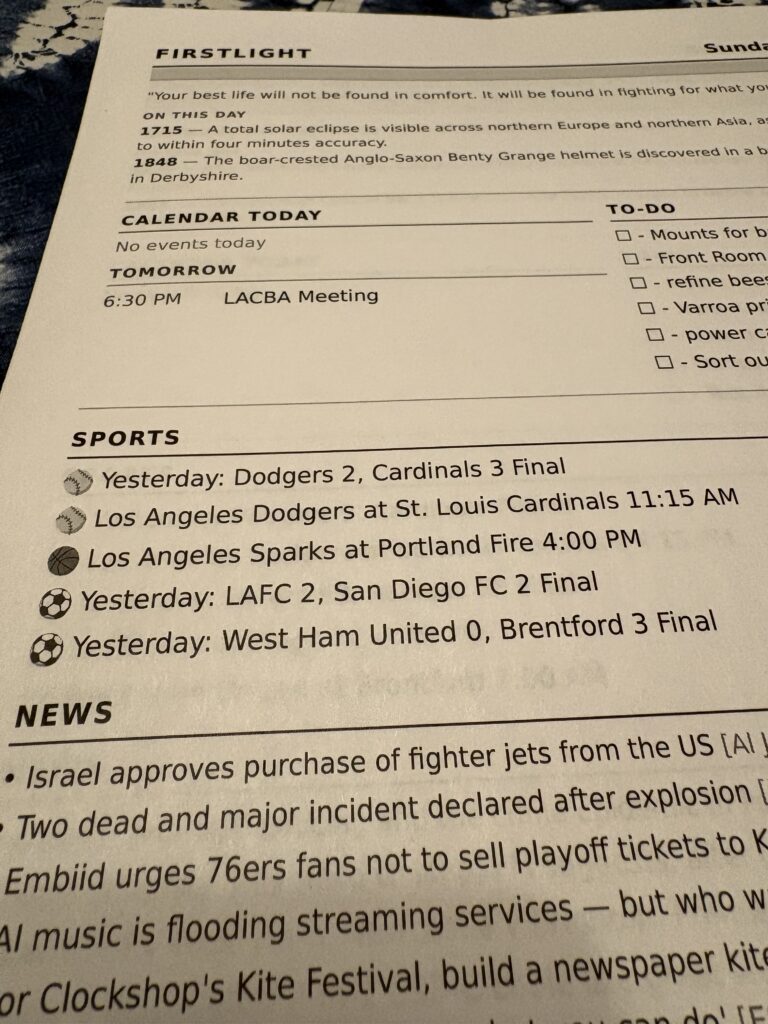

firstlight – you can’t doomscroll a piece of paper

I’d reached the point where reaching for my phone first thing in the morning felt obviously bad, yet I just couldn’t stop. The scroll wasn’t even rewarding anymore; it was a reflex. I wanted a different default.

You can’t doomscroll a piece of paper.

We still read a physical newspaper in the morning, because I’m old and old habits die hard. Newspapers are great for reading deeply and discovering things outside your algorithmic bubble, but they’re terrible at surfacing the specific information you actually care about day-to-day.

I built a small app called Firstlight. Every morning, before I wake up, it prints a single page of the stuff I actually want to know: the weather, what’s on my calendar, last night’s scores, a few news headlines, and my to-do list.

With a piece of paper, I can make notes or check things off my to-do list later in the day.

A printed page is a nice default.

It doesn’t notify me. There are no pop-ups. It’s the information I chose.

It also turns out to be the right amount of information. No one needs the entire internet hitting them at 6AM.

The page reflects what I want to see each morning, and the code is simple enough to adapt to your own preferences.

Here’s what’s on mine:

Weather – today’s forecast, hourly breakdown, air quality, and a heads-up about rain in the next few days

Calendar – today’s events from Google Calendar

Sports – last night’s scores and today’s game times from teams I follow across MLB, NFL, NHL, WNBA, NBA, NWSL, MLS, and the Premier League

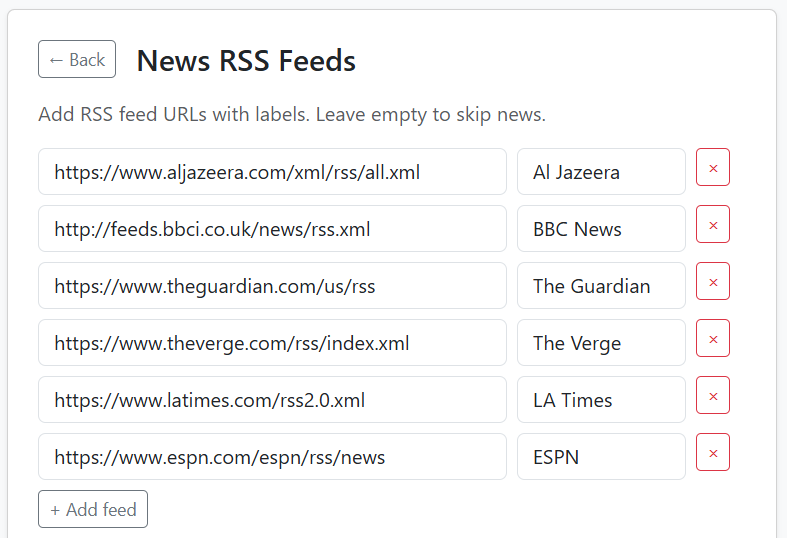

News – headlines from a handful of RSS feeds I picked

To-dos – a short list you can manage in the web UI or point to a text file somewhere

Daily – a quote and an “on this day in history” entry, for fun
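Pulling a few headlines out of a plain RSS feed takes very little code. Here's a minimal Python sketch using only the standard library. It is not Firstlight's actual code, and the function name `rss_headlines` is hypothetical. It assumes RSS 2.0 feeds (`<rss><channel><item><title>`) that you've already downloaded, for example with `urllib.request.urlopen(url).read()`; Atom feeds use a different layout and would need their own parsing.

```python
import xml.etree.ElementTree as ET


def rss_headlines(rss_xml, limit=3):
    """Return up to `limit` item titles from an RSS 2.0 document.

    Assumes the standard RSS 2.0 structure <rss><channel><item><title>.
    Accepts either str or bytes, as ET.fromstring does.
    """
    root = ET.fromstring(rss_xml)
    titles = []
    for item in root.iter("item"):  # items in document order
        if len(titles) >= limit:
            break
        # findtext returns None for a missing <title>, "" for an empty one
        title = (item.findtext("title") or "").strip()
        if title:
            titles.append(title)
    return titles
```

Taking only the first few items per feed is what keeps the news section to a handful of lines instead of the whole internet.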

Everything sources from free APIs that don’t require an account: Open-Meteo for weather, ESPN for sports, ZenQuotes, Wikipedia, plain RSS. Google Calendar is the one optional integration that needs OAuth, and you can skip it entirely.
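To show how little an account-free weather API asks of you, here's a Python sketch of the rain heads-up. It assumes Open-Meteo's `/v1/forecast` endpoint with the `daily=precipitation_probability_max` variable; the function names, the four-day window, and the 50% threshold are my own hypothetical choices, not taken from Firstlight.

```python
from urllib.parse import urlencode

FORECAST_URL = "https://api.open-meteo.com/v1/forecast"


def forecast_url(lat, lon, days=4):
    """Build an Open-Meteo request for each day's max chance of precipitation."""
    params = {
        "latitude": lat,
        "longitude": lon,
        "daily": "precipitation_probability_max",
        "timezone": "auto",  # report days in the location's local time
        "forecast_days": days,
    }
    return f"{FORECAST_URL}?{urlencode(params)}"


def rain_heads_up(forecast, threshold=50):
    """Return dates (YYYY-MM-DD) after today whose max rain chance >= threshold %.

    `forecast` is the parsed JSON body. Index 0 of the daily arrays is today,
    so it is skipped to look only at the next few days.
    """
    daily = forecast["daily"]
    days = daily["time"][1:]
    chances = daily["precipitation_probability_max"][1:]
    # Missing values come back as null (None), so skip them
    return [d for d, c in zip(days, chances) if c is not None and c >= threshold]
```

To try it, fetch the JSON with `json.load(urllib.request.urlopen(forecast_url(lat, lon)))` and pass the result to `rain_heads_up`.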

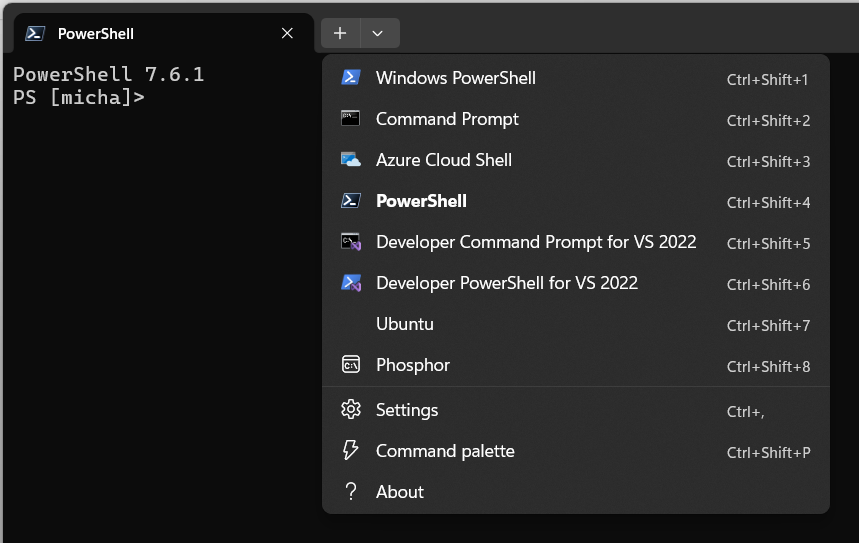

Firstlight runs in Docker on whatever always-on machine you have lying around: a NAS, a home server, or a Raspberry Pi. In my case it’s a QNAP NAS. Once a day, a scheduler inside the container fetches everything, renders a single-page PDF, and sends it directly to my network printer over IPP. No drivers, no cron, no external services.

There’s a web UI for previewing the digest, managing to-dos, browsing past digests, and reprinting on demand.

Setup is a 10-step wizard in the browser. The only step with any real friction is adding Google Calendar, because Google’s OAuth process can be confusing.

Worth mentioning: I wrote essentially none of this code by hand. The whole project was built using Claude Code with the Superpowers plugin, which adds structured workflows for brainstorming, planning, TDD, and debugging. The original design spec and implementation plan are checked into docs/superpowers/ if you want to see how the sausage was made.

I mention this not as a flex but because I think it matters. This is the kind of project (small, personal, self-hosted, with no users to impress, scratching a personal itch) that’s now genuinely easy to build. A few years ago, I could never have built this myself.

It’s built for people comfortable running self-hosted things. You need to be okay with Docker, a terminal, and a config file. The wizard handles the actual setup, but if something breaks, a modern LLM coding assistant is surprisingly good at helping diagnose any issue.

Source is at github.com/cruftbox/firstlight.

The README walks through deployment for both generic Docker hosts and QNAP specifically.

If your phone has stopped feeling rewarding in the morning and you’ve got an always-on machine somewhere, give firstlight a try.

Trapping Small Hive Beetles in Diatomaceous Earth

Small hive beetles are a pest of beehives. This shows how effective diatomaceous earth can be in beetle traps.

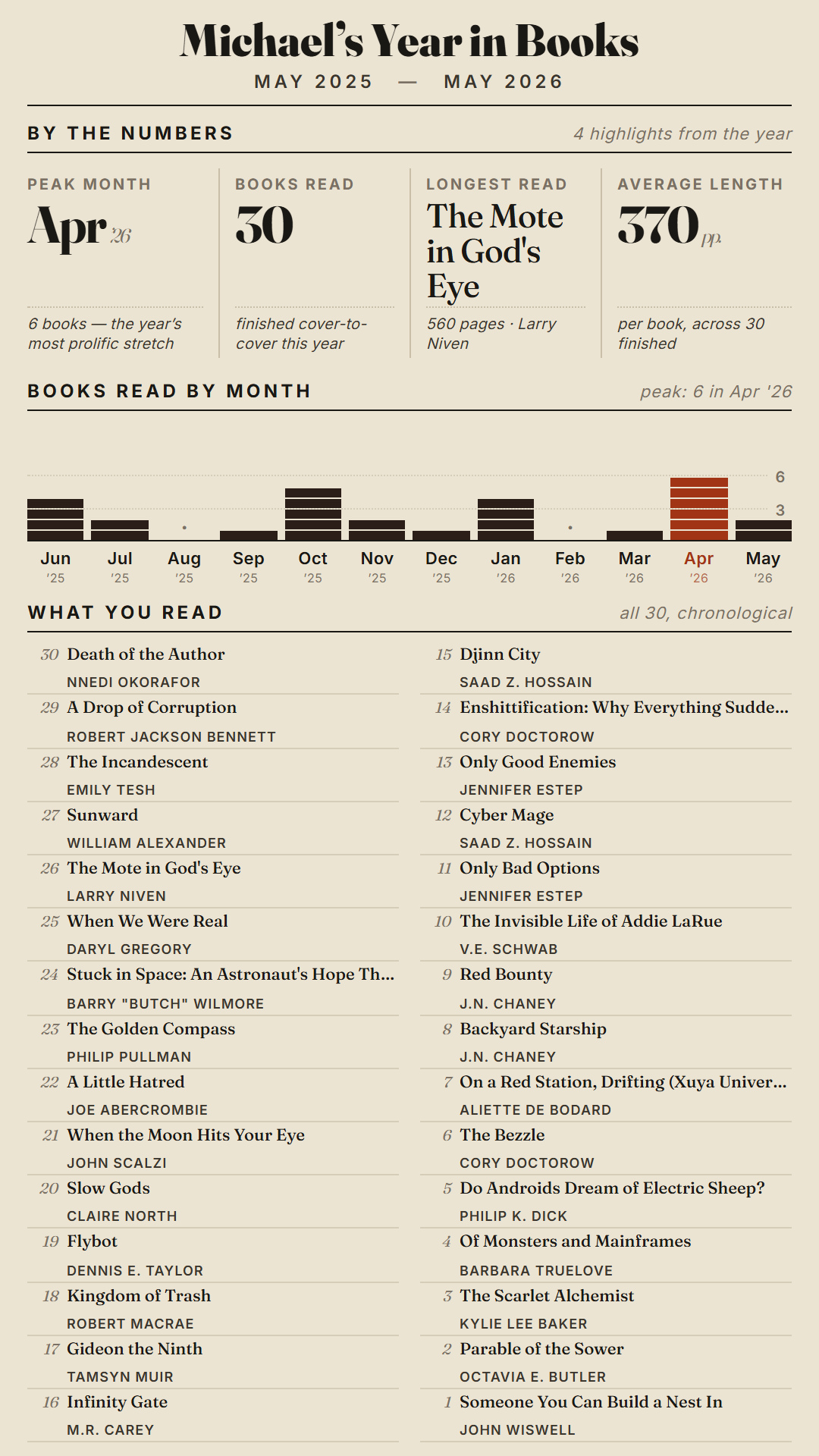

Year in Books – Goodreads Tool

I like reading and use Goodreads to help me keep track of books I’ve read. Goodreads gives you a year-end summary, which is great, but it only shows up in December. I wanted something I could generate any time that looked at the last twelve months, regardless of time of year.

So I built Year in Books, a new feature in my goodreads-tools project. It pulls your Goodreads read shelf via RSS, windows the last 12 months on a rolling basis, looks up genres through the Google Books API, and renders everything into nice graphics.
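The rolling window and the genre count are the interesting bits. Here's a minimal Python sketch, assuming you've already parsed the read shelf into `(title, date_read)` pairs and fetched a Google Books search response (`https://www.googleapis.com/books/v1/volumes?q=isbn:<ISBN>`) for each book. The function names are hypothetical, not goodreads-tools' own. Whether the window includes the date exactly one year ago is a judgment call; this version excludes it.

```python
from collections import Counter
from datetime import date


def one_year_before(d):
    """Same calendar day a year earlier; Feb 29 falls back to Feb 28."""
    try:
        return d.replace(year=d.year - 1)
    except ValueError:  # only Feb 29 can fail here
        return d.replace(year=d.year - 1, day=28)


def last_twelve_months(books, today):
    """Keep (title, date_read) pairs read in the window (one year ago, today]."""
    start = one_year_before(today)
    return [(title, read) for title, read in books if start < read <= today]


def genres_from_google_books(response):
    """Pull volumeInfo.categories from the first result of a volumes search."""
    items = response.get("items") or []
    if not items:
        return []
    return items[0].get("volumeInfo", {}).get("categories", [])


def genre_tally(genre_lists):
    """Count books per genre; a book with several categories counts in each."""
    return Counter(g for genres in genre_lists for g in genres)
```

Because the window is computed from `today` instead of January 1, the same report makes sense in March as it does in December.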

The output comes in four flavors: straight HTML, a PDF, a full-width web PNG, and a 9:16 social media PNG sized for Instagram, Bluesky, or Mastodon. HTML is generated and rendered into the PDF and PNGs. There’s a simple web UI to download the various versions.

For my kind of brain, these kinds of stats are interesting. I can see how my reading lines up with other events in my life.

This layout is what I like, but the code is there to modify as you see fit:

https://github.com/cruftbox/goodreads-tools

The Yellow Hive got robbed out

The weak Yellow Hive got robbed out by another bee colony.

Robbing is when another colony comes in and steals and eats the honey from a weak hive. I noticed the telltale sign of chewed-up wax cappings and did an inspection.

During robbing there is some fighting, in which bees die, but usually the remaining bees and queen abscond. Absconding is when bees leave a hive because it’s unsuitable due to damage, moisture, mites, pests, or robbing. They fly off to find a new home, which has a low success rate for an already weak colony.

Using IKEA PÄRKLA storage cases to hold bee hive boxes

I saw this on the internet and decided to give it a try.

Inexpensive storage cases that just happen to fit deep and medium bee boxes.

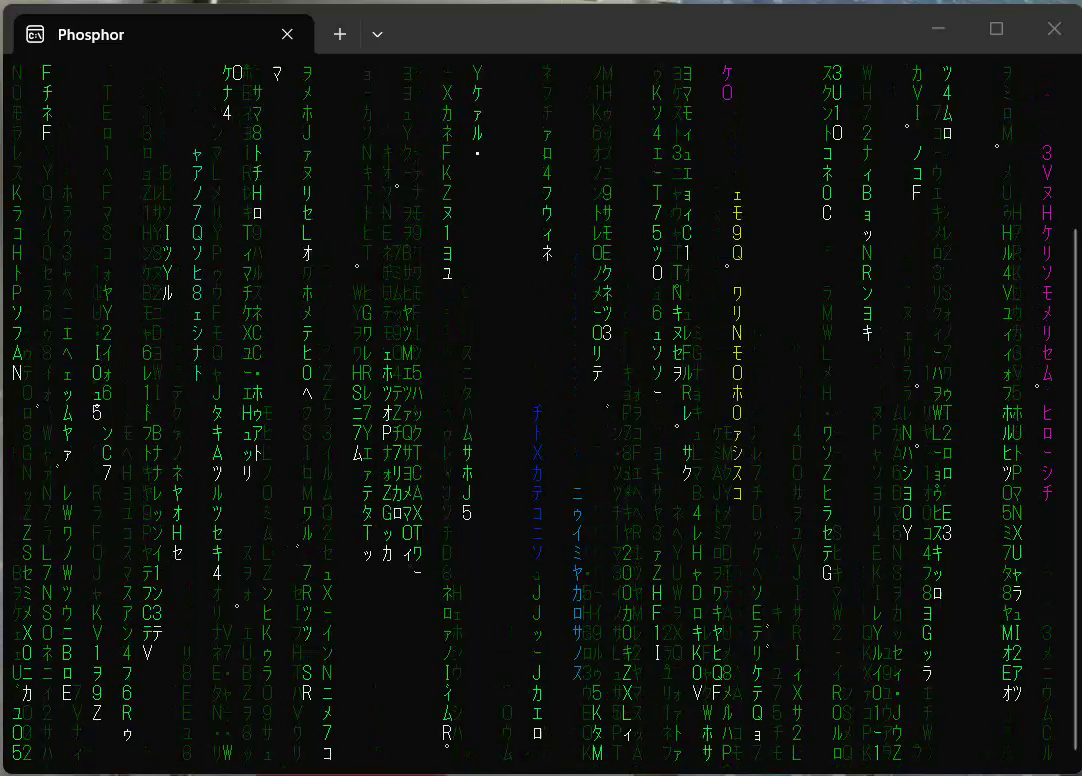

Phosphor – Matrix-style digital rain

While walking the dog I wondered how hard it would be to recreate the Matrix digital rain, a visual that’s lived rent-free in my head since 1999.

1999 seems like both yesterday and a million years ago.

Turns out it was pretty easy for Claude. From a brief prompt describing what I wanted, it got to work. I added the idea of occasional RGB ‘threads’ falling through for a little flourish.
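The core of the digital rain effect is simple: each column has a head that drops one row per frame, trailed by a fixed-length run of random glyphs, and it restarts above the screen once the whole trail has fallen off. Phosphor itself is a .NET app; this is a hypothetical Python sketch of the same idea, not its code, with the class and function names and the glyph set chosen for illustration.

```python
import random

# Half-width katakana plus digits, in the spirit of the film's glyphs
GLYPHS = "ｱｲｳｴｵｶｷｸｹｺ0123456789"


class Column:
    """One falling stream: a head row that moves down with a trail above it."""

    def __init__(self, height, rng):
        self.height = height
        self.rng = rng
        self.reset()

    def reset(self):
        # Start at or above the top edge so streams enter at different times
        self.head = -self.rng.randint(0, self.height)
        self.length = self.rng.randint(4, max(4, self.height // 2))

    def step(self):
        self.head += 1
        # The trail covers rows head-length+1 .. head; restart once it's all off-screen
        if self.head - self.length + 1 >= self.height:
            self.reset()


def render(columns, height, rng):
    """Return one frame as text rows; cells inside a trail get a random glyph."""
    rows = []
    for y in range(height):
        row = []
        for col in columns:
            inside = col.head - col.length < y <= col.head
            row.append(rng.choice(GLYPHS) if inside else " ")
        rows.append("".join(row))
    return rows
```

To animate it, call `step()` on every column, print the rows from `render`, move the cursor home with the ANSI escape `\x1b[H`, and sleep roughly 50 ms between frames. One way to add RGB threads would be a per-column color wrapped around each glyph as an ANSI color escape.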

I think using the Superpowers agent harness was a bit of overkill, but it helped me learn the process.

My desktop computer runs Windows, so Claude decided to make a .NET executable that runs inside Windows Terminal. I don’t even have to launch a separate app; it’s just an entry in Terminal’s pulldown menu.

An idea while walking the dog turned into a working app before lunch.

Creating things on a whim feels a little magical.

The code is up on GitHub: https://github.com/cruftbox/phosphor

Refining Beeswax

How I refine beeswax from old honeycomb and cappings.

I built a steam wax melter from a wallpaper steamer and some old bee boxes.

Works great for getting the wax out of nasty old comb.