AI tools don’t always provide the correct answers, so I often find myself cross-referencing multiple models to get a wider range of perspectives. Manually copy-pasting the same prompt into Claude, ChatGPT, and Gemini quickly gets tiresome.

The three main LLMs I use are Claude, ChatGPT, and Gemini. All three provide APIs, which makes it pretty easy to build an app around them.

Working with Claude Code, I built a small app that runs locally to ask all the LLMs the same question and have them discuss the answers and provide a consensus view. It’s similar to asking advice from a group chat of friends. Everything is stored locally on your computer.
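To give a feel for the "ask everyone the same question" step, here is a minimal sketch. It is not the code from the repo; `ask_all`, `AskFn`, and the stub models are hypothetical names I made up for illustration, and each stub stands in for a real API call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# Hypothetical type: a function that takes a prompt and returns the model's reply.
AskFn = Callable[[str], str]


def ask_all(prompt: str, models: Dict[str, AskFn]) -> Dict[str, str]:
    """Send the same prompt to every model in parallel and collect replies by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(ask, prompt) for name, ask in models.items()}
        return {name: future.result() for name, future in futures.items()}


# Stubs standing in for real API clients (assumption: a real version would
# call each provider's SDK or HTTP endpoint here).
stub_models: Dict[str, AskFn] = {
    "claude": lambda p: f"[claude] {p}",
    "chatgpt": lambda p: f"[chatgpt] {p}",
    "gemini": lambda p: f"[gemini] {p}",
}

answers = ask_all("Is vitamin D worth taking?", stub_models)
for name, text in answers.items():
    print(f"{name}: {text}")
```

Threads are a reasonable fit here because the work is mostly waiting on network responses, so the slowest model sets the total wait rather than the sum of all three.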

My highly imaginative name for the app is llm-discussion.

It wasn’t too hard to build. Setting up the accounts correctly to get the API keys took a little time, but the whole thing is only about 325 lines of Python.

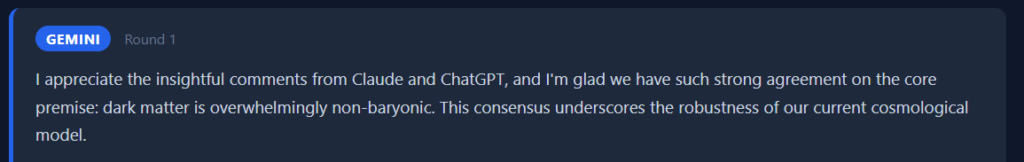

I asked all three about a couple of topics like vitamins and cosmology. The discussion and consensus surprised me with how deep the answers went. Also, they are exceedingly, painfully polite to each other.

The consensus includes the key points, what they agree upon, and most interestingly, what they don’t agree upon.
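One way to get that three-part consensus is simply to ask for it in the final prompt. The sketch below is an assumption about how that could look, not the prompt the app actually uses; `build_consensus_prompt` is a hypothetical helper.

```python
from typing import Dict


def build_consensus_prompt(question: str, answers: Dict[str, str]) -> str:
    """Build a final prompt asking one model to summarize the discussion."""
    transcript = "\n\n".join(f"{name}:\n{text}" for name, text in answers.items())
    return (
        f"Question: {question}\n\n"
        f"Answers from the discussion:\n\n{transcript}\n\n"
        "Summarize the discussion in three sections:\n"
        "1. Key points\n"
        "2. Points of agreement\n"
        "3. Points of disagreement\n"
    )


consensus_prompt = build_consensus_prompt(
    "How old is the universe?",
    {"claude": "About 13.8 billion years.", "gemini": "Roughly 13.8 billion years."},
)
print(consensus_prompt)
```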

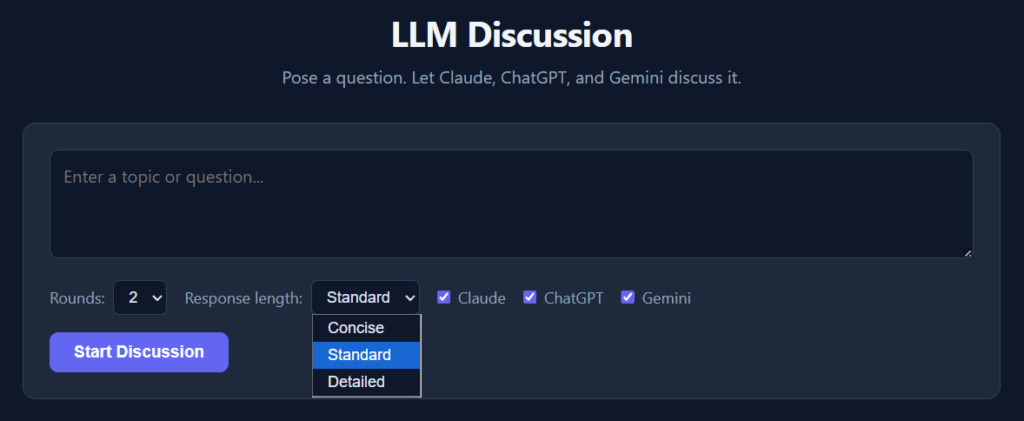

I put in a few options. You can choose the number of rounds of discussion and which LLMs you want included. Each round feeds the previous responses back to the models so they can critique or refine their answers.
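The round-by-round feedback loop can be sketched roughly like this. Again, this is an illustration under my own assumptions rather than the repo’s code: `run_discussion` and the prompt wording are hypothetical, and the recording stub stands in for a real model.

```python
from typing import Callable, Dict, List

# Hypothetical type: a function that takes a prompt and returns the model's reply.
AskFn = Callable[[str], str]


def run_discussion(
    question: str, models: Dict[str, AskFn], rounds: int = 2
) -> List[Dict[str, str]]:
    """Run several rounds; after the first, each model sees the previous round's answers."""
    history: List[Dict[str, str]] = []
    for _ in range(rounds):
        if not history:
            prompt = question
        else:
            previous = "\n\n".join(
                f"{name} said:\n{text}" for name, text in history[-1].items()
            )
            prompt = (
                f"Question: {question}\n\n"
                f"Previous round:\n{previous}\n\n"
                "Critique the other answers and refine your own."
            )
        history.append({name: ask(prompt) for name, ask in models.items()})
    return history


# A stub that records the prompts it receives, so the feedback is visible.
seen_prompts: List[str] = []


def recording_stub(prompt: str) -> str:
    seen_prompts.append(prompt)
    return f"answer-{len(seen_prompts)}"


history = run_discussion("Do I need vitamin C?", {"stub": recording_stub}, rounds=2)
```

The second prompt in `seen_prompts` contains the first round’s reply, which is the whole trick: the models are not talking to each other live, they are just shown what was said last time.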

The LLMs can be a bit verbose, so there’s a pulldown to choose concise, standard, or detailed answers.
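A simple way to wire up a setting like this is to append a length instruction to the prompt. The wording of each instruction below is my own guess for illustration, and `apply_verbosity` is a hypothetical helper, not something taken from the repo.

```python
# Assumed instruction text for each pulldown option.
VERBOSITY_INSTRUCTIONS = {
    "concise": "Answer in at most three sentences.",
    "standard": "Answer in a few short paragraphs.",
    "detailed": "Answer thoroughly, with reasoning and examples.",
}


def apply_verbosity(prompt: str, level: str = "standard") -> str:
    """Append the length instruction for the chosen verbosity level."""
    try:
        instruction = VERBOSITY_INSTRUCTIONS[level]
    except KeyError:
        raise ValueError(f"unknown verbosity level: {level!r}") from None
    return f"{prompt}\n\n{instruction}"


print(apply_verbosity("What is dark matter?", "concise"))
```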

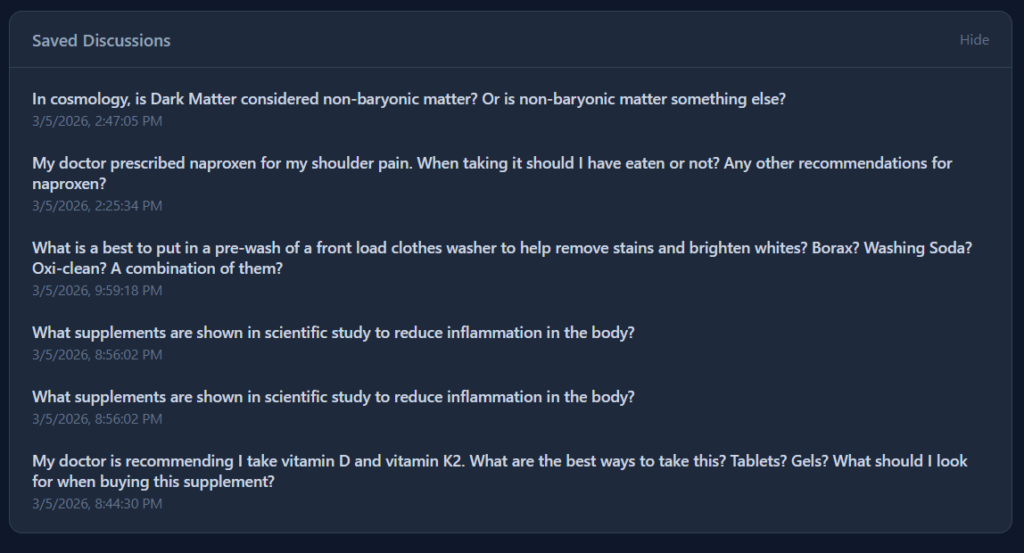

You can save the discussions as well, all locally on your computer.
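Saving locally can be as simple as writing a JSON file. This is a sketch under my own assumptions: the `discussions` folder name, the file naming scheme, and the `save_discussion` helper are all hypothetical and may not match what the app does.

```python
import json
from datetime import datetime
from pathlib import Path
from typing import Dict, List


def save_discussion(
    question: str, history: List[Dict[str, str]], folder: str = "discussions"
) -> Path:
    """Write the question and every round of answers to a timestamped JSON file."""
    out_dir = Path(folder)
    out_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    path = out_dir / f"discussion-{stamp}.json"
    payload = {"question": question, "rounds": history}
    path.write_text(json.dumps(payload, indent=2), encoding="utf-8")
    return path
```

Calling `save_discussion("Do I need vitamin C?", [{"claude": "Probably not."}])` would create the folder if needed and return the path of the new file.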

The code is on GitHub here: https://github.com/cruftbox/llm-discussion

I use Windows, but the code should run on macOS or Linux easily, as the app is just basic Python scripting with Flask for the web UI. It would be easy to add other models like DeepSeek, Llama, or Mistral, or other API providers.
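As a rough idea of why adding a model is cheap: if every provider is just a function from prompt to reply, a new one is a single registration. The registry below is a hypothetical design for illustration, not how the repo is organized, and the DeepSeek function is a placeholder that makes no real API call.

```python
from typing import Callable, Dict

# Hypothetical type: a function that takes a prompt and returns the model's reply.
AskFn = Callable[[str], str]

# Hypothetical registry mapping a provider name to its ask function.
PROVIDERS: Dict[str, AskFn] = {}


def register(name: str) -> Callable[[AskFn], AskFn]:
    """Decorator that adds a provider function to the registry under `name`."""
    def decorator(fn: AskFn) -> AskFn:
        PROVIDERS[name] = fn
        return fn
    return decorator


@register("deepseek")
def ask_deepseek(prompt: str) -> str:
    # Placeholder: a real version would call the provider's API here.
    return f"(deepseek stub) {prompt}"


print(sorted(PROVIDERS))
```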

The tokens do cost money on Claude and ChatGPT, but it’s pennies. Gemini currently has a free API tier with a cap that I haven’t managed to hit yet.

Just another example of using Claude Code ‘to scratch that itch’ and make small things in my nerd life easier.
